The last cool thing in this Oculus Pointer Trilogy is how to work with Coherent UI Web Views using the Oculus Pointer we made in post 1.

To see how to select 3D GameObjects (like buttons), check out post 2.

____________________________________________________________

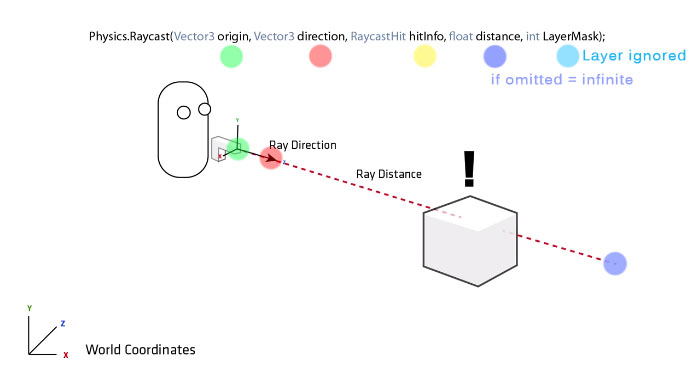

Raycasts

If you read Post 2, you'll remember I mentioned that Raycasts are kind of backward from the way you normally think of input going (player-out). Fortunately, Coherent UI is pretty much self-contained, so we can put the Raycast in the UI script.

Basically: we're going to have Coherent UI respond to the pointer, rather than having the pointer trigger Coherent UI.

Why?

A combination of reasons coming together into a single solution:

- Unity's mouse function actually IS a Raycast on the back end

- Coherent UI calculates what you clicked on based on the view texture's x/y coordinates

- RaycastHit.textureCoord is the only way I know to find and send the texture x/y

____________________________________________________________

Coherent UI System

This is the script that comes with your Coherent UI system, and it's the one we'll be adding to in order to do this little trick. I won't be showing the full altered script, but I'll give you the placement for each snippet we add.

____________________________________________________________

Public Variables

The first thing to note when you're looking at the Coherent UI System script in the editor is that almost all of its values are private. We can't see them, so we can't check them in the middle of UI navigation.

It's also worth noting all those specialized CoUI variables don't share well between functions.

You're going to want to add a few public variables for you to work with inside and outside the script. I've made comment lines on either side to make it obvious where I've changed the script.
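The original snippet isn't reproduced here, so this is only a minimal sketch of what the added public variables might look like. The class name and every field except `oculus` (which shows up later in `if (oculus) {`) are hypothetical; in practice the fields would sit near the top of the Coherent UI System script rather than in their own component.

```csharp
using UnityEngine;

// Hypothetical holder for the extra state. In a real project these fields
// would be pasted into the Coherent UI System script itself.
public class OculusPointerState : MonoBehaviour
{
    // ---- Oculus pointer additions: start ----
    public bool oculus;        // true when an Oculus rig was found in the scene
    public Transform rightEye; // origin of the pointer ray (assumed name)
    public Transform pointer;  // the pointer object from post 1 (assumed name)
    public int pointerX;       // last view-texture x hit by the pointer
    public int pointerY;       // last view-texture y hit by the pointer
    // ---- Oculus pointer additions: end ----
}
```

Because the fields are public, they show up in the Inspector while the scene is running, which is the whole point of adding them.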

____________________________________________________________

Finding Your Assets

We're going to do this in two places: OnLevelWasLoaded(), which we'll add, and Start(), which we'll add to. This tells the script whether or not to look for Oculus interaction.

Here I have it looking for any of the Oculus prefabs I use that could interact with Coherent UI. If any are present, the script expects to interact with an Oculus pointer.

- Make sure to use your own asset names
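A rough sketch of that check, assuming the prefabs can be found by name with `GameObject.Find`. The class name, the helper `FindOculusRig`, and the two prefab names are placeholders, not the names from the original script. Note that `GameObject.Find` only sees active objects and that instantiated prefabs often carry a "(Clone)" suffix.

```csharp
using UnityEngine;

public class OculusAssetFinder : MonoBehaviour
{
    public bool oculus;

    // Placeholder names: replace these with the Oculus prefabs in your project.
    private static readonly string[] oculusPrefabNames =
    {
        "OVRPlayerController",
        "OVRCameraController"
    };

    void Start()
    {
        oculus = FindOculusRig();
    }

    // Unity calls this after a new level has loaded. Newer Unity versions
    // replace it with the SceneManager.sceneLoaded event.
    void OnLevelWasLoaded(int level)
    {
        oculus = FindOculusRig();
    }

    // Returns true if any of the listed objects exists in the loaded scene.
    private bool FindOculusRig()
    {
        foreach (string prefabName in oculusPrefabNames)
        {
            if (GameObject.Find(prefabName) != null)
                return true;
        }
        return false;
    }
}
```

Running the same check from both methods covers the first scene as well as every scene loaded afterwards.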

____________________________________________________________

void TrackInputFocus ()

This is a void function that runs every Update cycle. Find it; we're going to put our Raycast here.

The script:

Note

- See that hanging "} else {" at the bottom? That closes "if (oculus) {"

- Don't forget to close that else block after the end of the next (original) raycast

The Logic:

What we're doing here is

- Defining a ray from the right eye out through the pointer. This defeats the distortion caused by the Oculus program.

- Making a Raycast with that ray

- If the raycast hits a CoUI view, it records the x/y coordinate hit on the view texture and responds accordingly

- If the mouse is clicked while the raycast is pointed, it will click that point on the view.
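The steps above could be sketched roughly like this. It's not the original snippet: the class and method names are made up, and the view's width and height are passed in as plain numbers so the sketch doesn't depend on any particular Coherent UI member. Keep in mind that `RaycastHit.textureCoord` only returns useful values when the surface you hit has a MeshCollider.

```csharp
using UnityEngine;

public static class OculusPointerRaycast
{
    // Casts a ray from the eye through the pointer. On a hit, converts the
    // UV coordinate (0..1) into pixel coordinates on a view of the given size.
    public static bool TryGetViewPoint(
        Transform rightEye, Transform pointer,
        int viewWidth, int viewHeight,
        out RaycastHit hit, out int x, out int y)
    {
        x = 0;
        y = 0;

        // Ray from the right eye out through the pointer.
        Vector3 direction = (pointer.position - rightEye.position).normalized;
        Ray ray = new Ray(rightEye.position, direction);

        if (!Physics.Raycast(ray, out hit))
            return false;

        // Scale the UV hit up to the view texture's pixel size.
        Vector2 uv = hit.textureCoord;
        x = (int)(uv.x * viewWidth);
        // Assumption: the view's y axis runs the same way as the UVs.
        // If clicks land upside down, use (1f - uv.y) here instead.
        y = (int)(uv.y * viewHeight);
        return true;
    }
}
```

Inside TrackInputFocus, a call like this would live in the `if (oculus) {` branch: check that `hit.collider` carries a Coherent UI view, hand x/y to that view, and use `Input.GetMouseButtonDown(0)` to decide whether to send a click at that point.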

____________________________________________________________

Mouse Position

There's one last thing we need to do at the end of the script to update the mouse position in Oculus mode.
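The original snippet isn't shown, so the following is only a guess at the shape of that change: in Oculus mode, report the pointer's last texture hit instead of the real mouse position. The class, the method, and the `pointerX`/`pointerY` parameters are all hypothetical.

```csharp
using UnityEngine;

public static class OculusMousePosition
{
    // Returns the position to report to the view: the pointer's last texture
    // hit in Oculus mode, otherwise the regular mouse position.
    public static Vector2 Current(bool oculus, int pointerX, int pointerY)
    {
        if (oculus)
            return new Vector2(pointerX, pointerY);

        Vector3 mouse = Input.mousePosition;
        return new Vector2(mouse.x, mouse.y);
    }
}
```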

____________________________________________________________

Final Step

There's a special way to set up your Oculus pointer-using scenes.

- Remove the OVR Manager script from your Oculus player

- Make a GameObject tagged MainCamera that does not have a camera component on it

- Put the OVR Manager script on that

This arrangement was found by trial and error. I only know that it has something to do with Coherent UI needing a Main Camera, and that I got bugs doing it other ways.

____________________________________________________________

This is actually remarkably simple and mostly copied from other bits of the same function. The really tricky part was identifying the texture coordinate technique. The rest is just variable management. :D

Tadaa!

____________________________________________________________

All blog posts by UnityGirl are inspired by my work with Brunelleschi: Age of Architects on the Aesop Games team. Download the current Unity client on IndieDB.